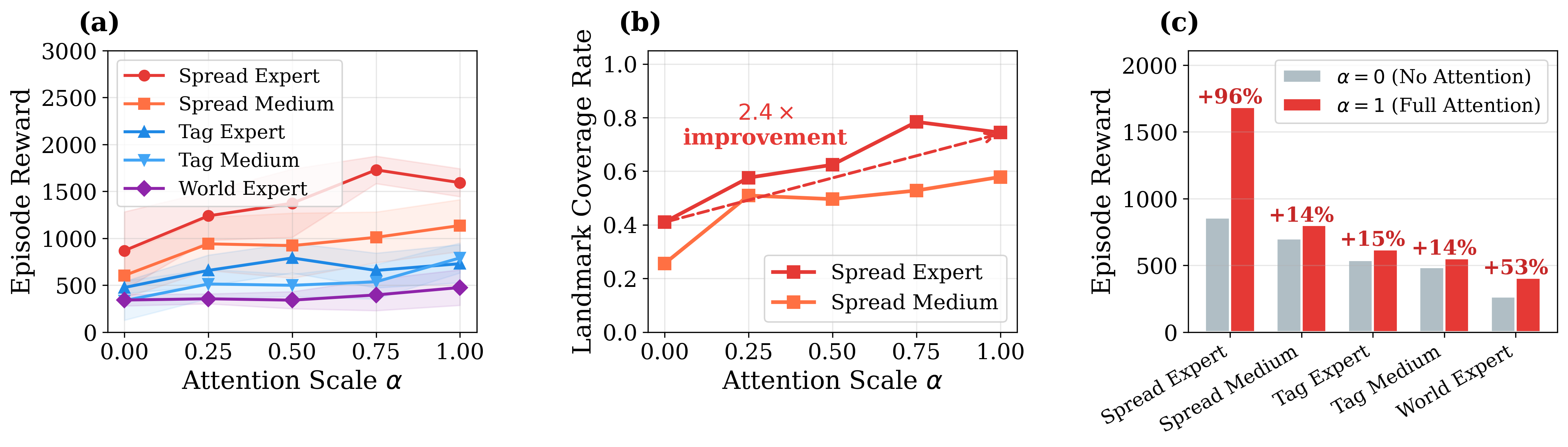

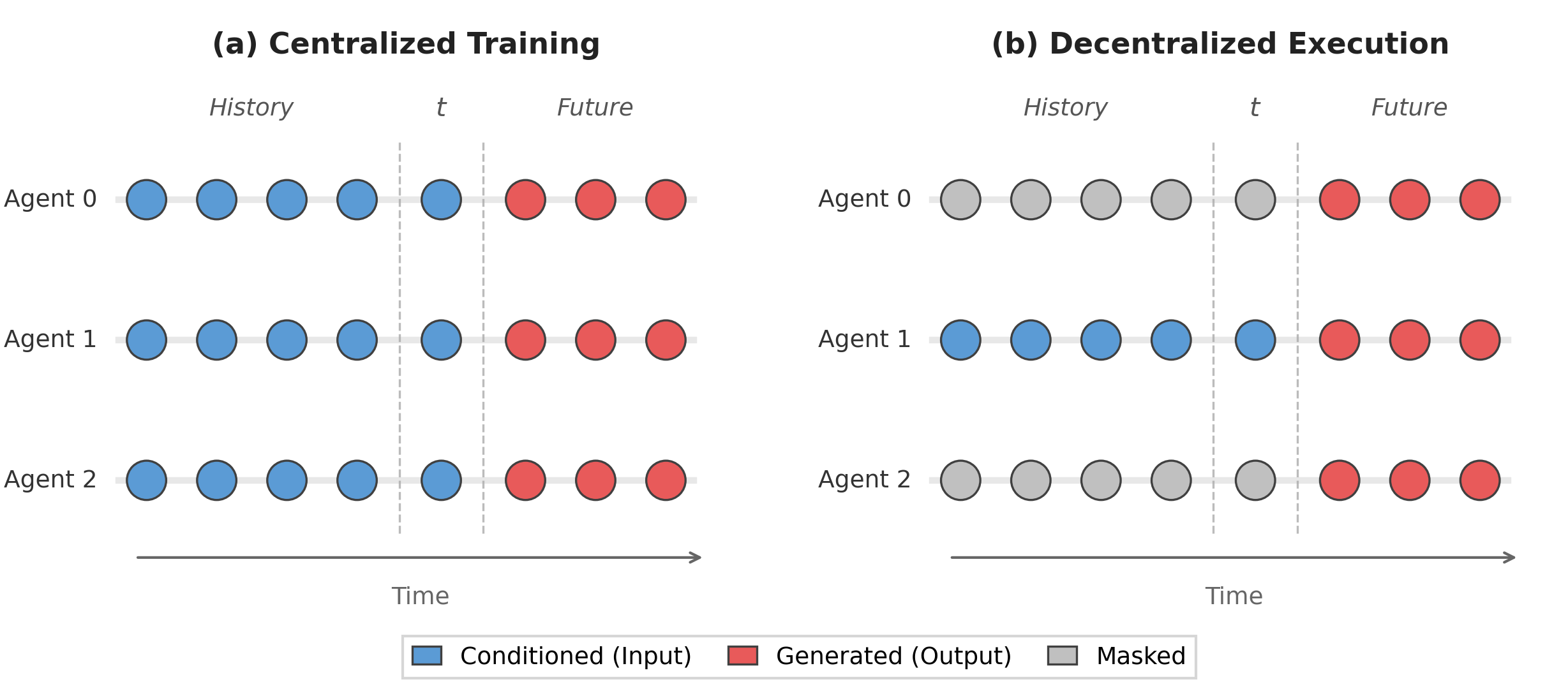

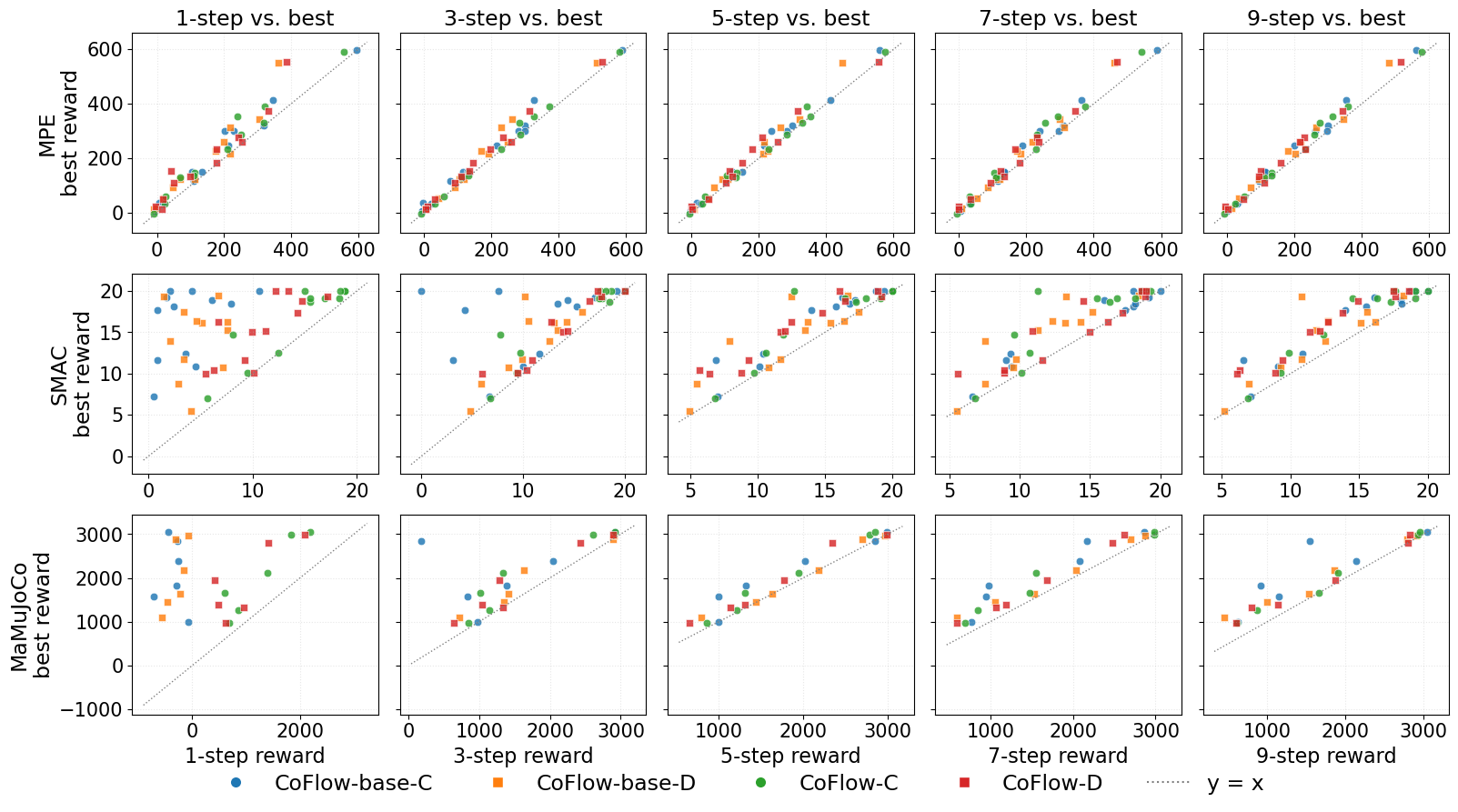

Answer: Yes. CoFlow-C is best on most table entries, while CoFlow-D remains competitive under decentralized execution. The gains are most visible on coordination-heavy MPE tasks.

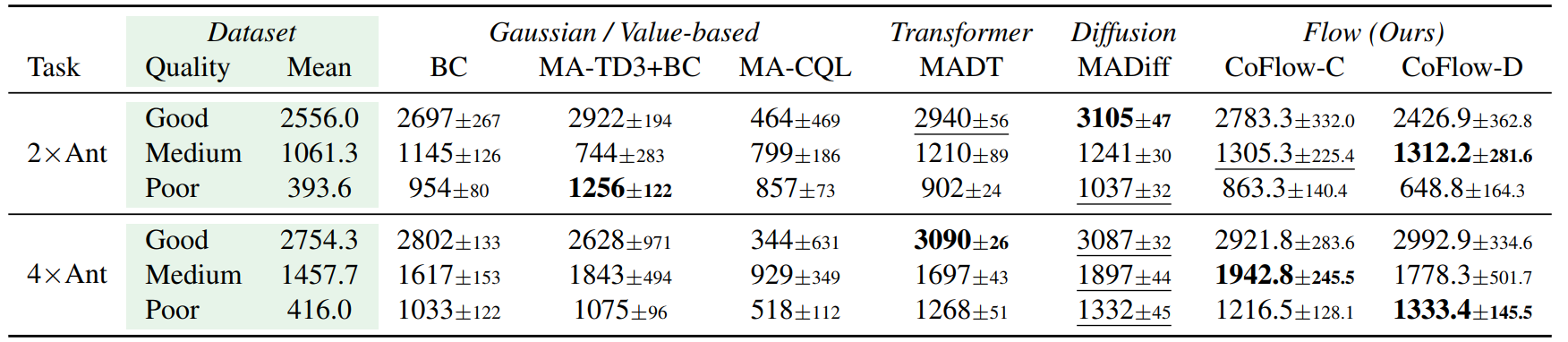

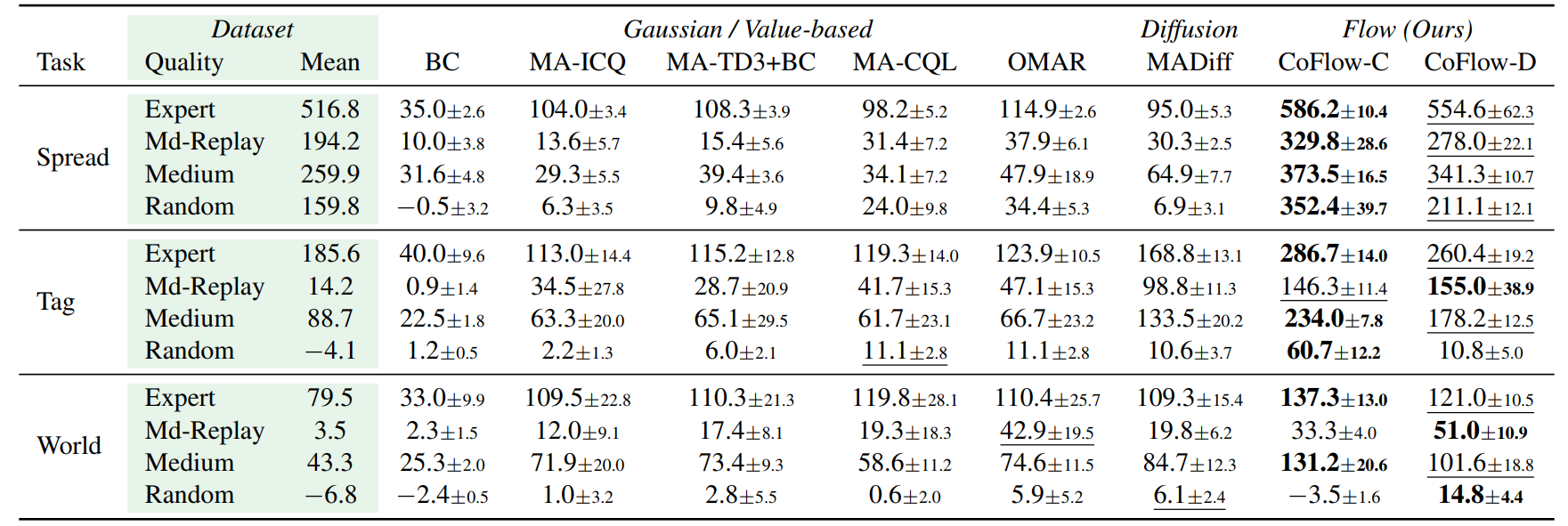

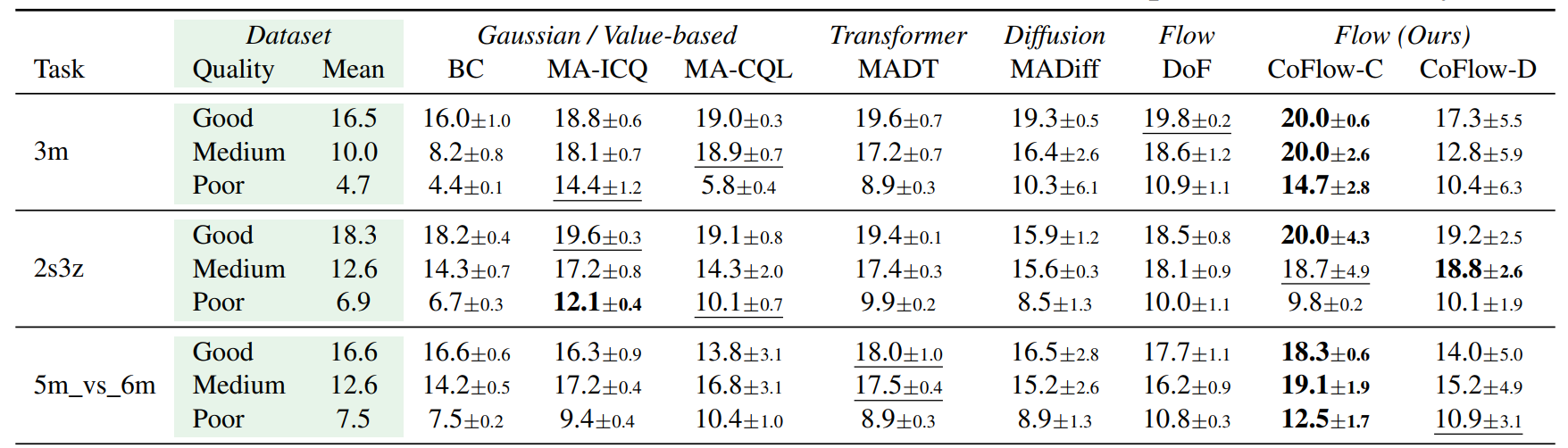

Main Result Tables

The full result tables are included as screenshots. In each row, bold marks the best method and underline marks the second-best method.
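For readers who want to reproduce the bold and underline marking in their own tables, the following is a minimal sketch, not the code used to produce the screenshots. It assumes higher scores are better by default. The function name `mark_row`, the `higher_is_better` flag, and the method names and numbers in the usage note are hypothetical placeholders, not values from the result tables.

```python
def mark_row(scores, higher_is_better=True):
    """Format one table row: bold the best score, underline the second-best.

    `scores` maps a method name to its numeric score for this row.
    Assumption: "best" means highest unless higher_is_better is False.
    """
    if not scores:
        return {}
    # Rank method names by score; ties keep their original insertion order.
    ranked = sorted(scores, key=scores.get, reverse=higher_is_better)
    best = ranked[0]
    second = ranked[1] if len(ranked) > 1 else None

    cells = {}
    for name, value in scores.items():
        text = f"{value:.2f}"
        if name == best:
            text = f"**{text}**"      # markdown bold
        elif name == second:
            text = f"<u>{text}</u>"   # inline HTML underline
        cells[name] = text
    return cells
```

Called on a placeholder row such as `mark_row({"method_a": 1.0, "method_b": 3.0, "method_c": 2.0})`, the sketch bolds the `method_b` cell and underlines the `method_c` cell. For metrics where lower is better, pass `higher_is_better=False`.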

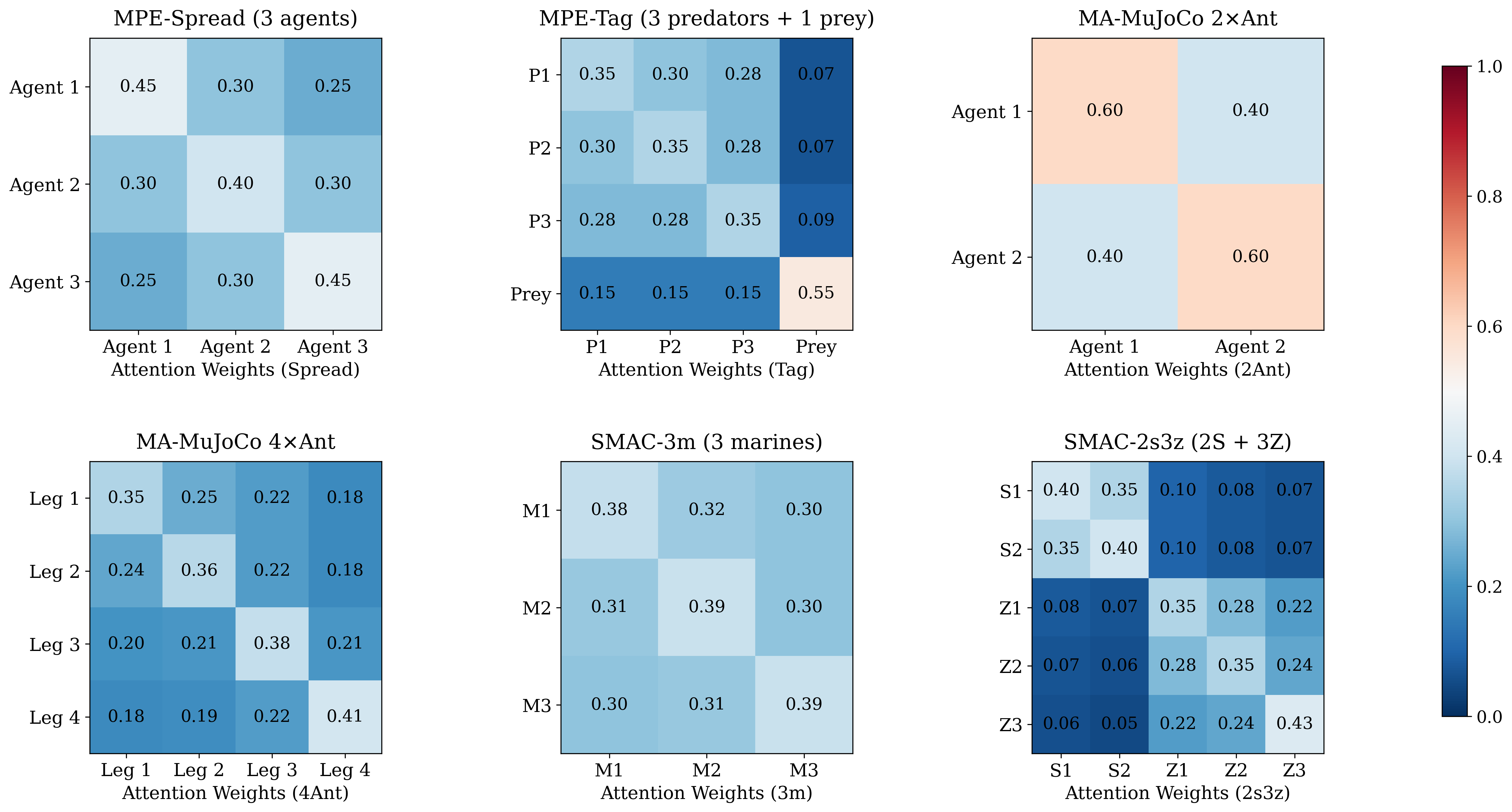

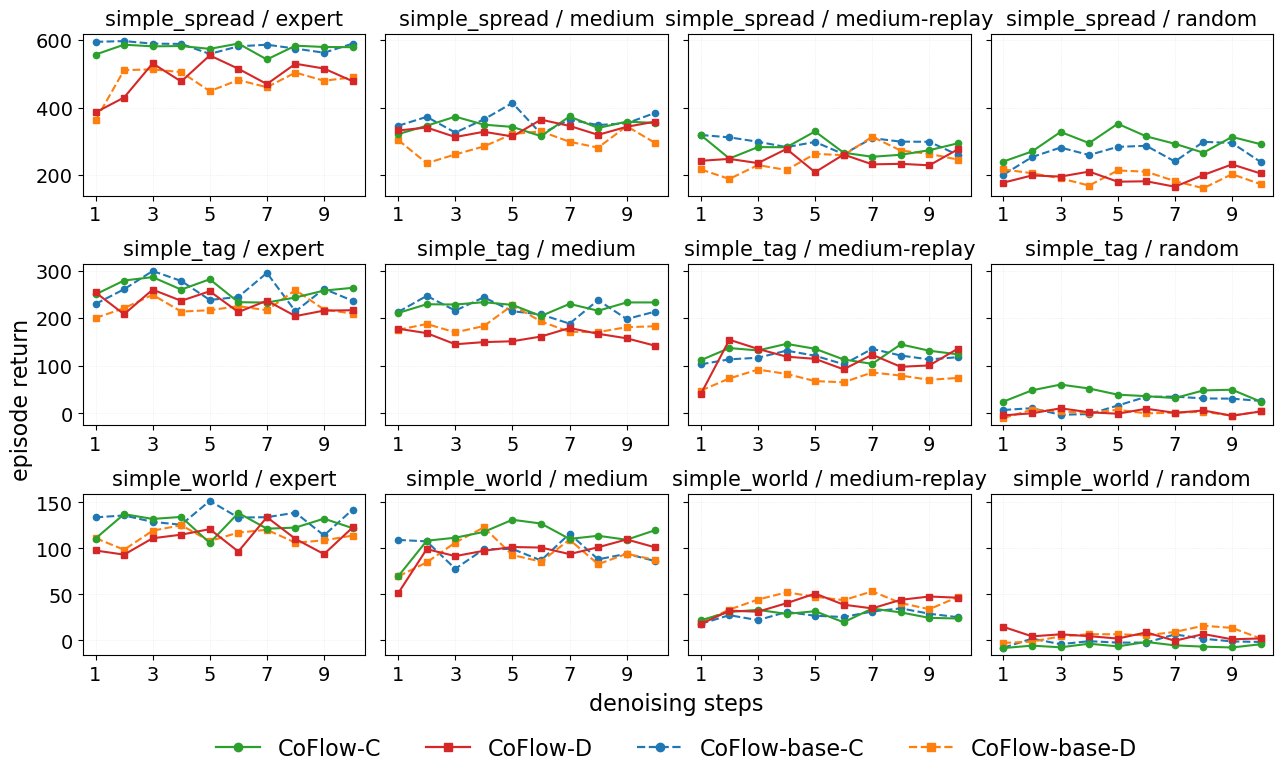

Continuous-action cooperative benchmark on Spread, Tag, and World.

Discrete-action StarCraft benchmark with partial observability.

Continuous-action locomotion benchmark on 2xAnt and 4xAnt.